The Matsushima Laboratory conducts research on machine learning infrastructure for large-scale data analysis.

In today’s society, a wide variety of data are generated every day, including data on urban infrastructure and the global environment, medical and health-related data, marketing data, and data on materials and their physical properties. Analyzing and utilizing such large-scale data is indispensable for developing the fundamental information technologies behind new social systems and services. Our laboratory conducts research on “statistical learning theory”, the fundamental theory of machine learning; on “data mining”, its practical application; and on algorithms for large-scale data that support both aspects of machine learning.

Research on Learning Schemes

We research and develop algorithms for optimization in machine learning.

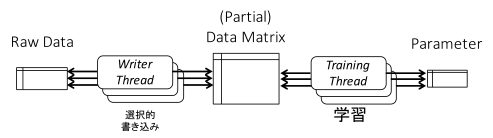

Dual Cached Loops

Dual Cached Loops (DCL) is an optimization scheme for machine learning methods that runs two types of threads asynchronously. The Writer thread reads data sequentially from the hard disk and repeatedly writes them into RAM. The Training thread continuously updates the model parameters without waiting for other operations. We showed that this scheme efficiently processes data that exceed memory capacity, so that terabyte-scale data can be handled on a single machine.
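The two-loop structure can be sketched in Python as follows. This is a minimal illustration under our own assumptions, not the laboratory's implementation: the "disk" is a hypothetical callable `disk_stream` that returns a fresh iterator over `(x, y)` pairs, the model is linear least squares trained by plain SGD, and the RAM cache is a fixed-size list that the Writer overwrites round-robin. Because Python threads share the GIL, the sketch shows the control flow rather than a real parallel speedup.

```python
import random
import threading


def dual_cached_loops(disk_stream, dim, cache_size=256, steps=20000, lr=0.1, seed=0):
    """DCL-style sketch: a Writer thread streams examples from "disk" into a
    fixed-size RAM cache while a Training thread runs SGD on cached examples.

    disk_stream is a hypothetical callable returning a fresh iterator over
    (x, y) pairs; it stands in for a sequential scan of a file on disk.
    """
    rng = random.Random(seed)
    initial = disk_stream()
    # Pre-fill the cache so the trainer has data from its first step.
    cache = [next(initial) for _ in range(cache_size)]
    lock = threading.Lock()
    stop = threading.Event()
    w = [0.0] * dim

    def writer():
        # Sequential passes over the data, overwriting cache slots round-robin.
        it, slot = disk_stream(), 0
        while not stop.is_set():
            try:
                example = next(it)
            except StopIteration:
                it = disk_stream()  # start another pass over the "disk"
                continue
            with lock:
                cache[slot] = example
            slot = (slot + 1) % cache_size

    def trainer():
        # Never waits for disk I/O: samples whatever is cached and takes
        # one SGD step on the squared loss 0.5 * (<w, x> - y)**2.
        for _ in range(steps):
            with lock:
                x, y = cache[rng.randrange(cache_size)]
            err = sum(wj * xj for wj, xj in zip(w, x)) - y
            for j in range(dim):
                w[j] -= lr * err * x[j]
        stop.set()  # tell the writer to finish

    threads = [threading.Thread(target=writer), threading.Thread(target=trainer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return w


def make_stream(n=5000, seed=1):
    """Hypothetical synthetic data source: noise-free y = 2*x0 - x1."""
    def stream():
        r = random.Random(seed)
        for _ in range(n):
            x = [r.uniform(-1.0, 1.0), r.uniform(-1.0, 1.0)]
            yield x, 2.0 * x[0] - x[1]
    return stream


if __name__ == "__main__":
    w = dual_cached_loops(make_stream(), dim=2)
    print([round(v, 3) for v in w])  # expected: [2.0, -1.0]
```

The trainer only ever blocks on a short in-memory lock, never on the data source, which mirrors the idea that parameter updates proceed regardless of how fast the Writer can read.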

Distributed Stochastic Optimization Based on Double Separability

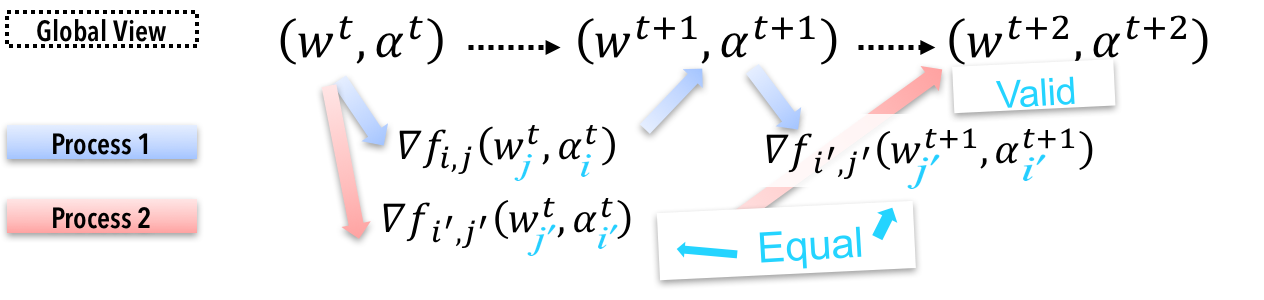

Stochastic optimization is often used to solve regularized empirical risk minimization (RERM) problems when the data are too large for a single machine to handle. When stochastic optimization runs in a distributed environment, however, the parameters must be synchronized frequently, and this synchronization becomes the bottleneck in computation time.
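To see where the synchronization cost comes from, RERM over m examples x_i ∈ ℝ^d can be written in a standard form (the notation is ours, not the page's): λ is the regularization weight, φ_j a coordinate-wise convex regularizer, ℓ_i a convex loss that absorbs the label of example i, and η_t a step size. A stochastic gradient step on a sampled example i reads and writes every coordinate w_j with x_ij ≠ 0 (and all of w when the regularizer gradient is applied densely), so parallel workers sampling different examples generally touch overlapping coordinates of the shared w.

```latex
\begin{aligned}
\min_{w \in \mathbb{R}^d} \; P(w) &= \lambda \sum_{j=1}^{d} \phi_j(w_j) + \frac{1}{m} \sum_{i=1}^{m} \ell_i\bigl(\langle w, x_i \rangle\bigr), \\
w_j &\leftarrow w_j - \eta_t \Bigl( \lambda\, \phi_j'(w_j) + \ell_i'\bigl(\langle w, x_i \rangle\bigr)\, x_{ij} \Bigr), \qquad j = 1, \dots, d.
\end{aligned}
```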

We developed Distributed Stochastic Optimization (DSO), a method that instead solves a saddle-point problem equivalent to RERM and thereby reduces the number of synchronizations.
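Double separability can be checked numerically with a small sketch. With λ the regularization weight, φ_j a coordinate-wise regularizer and ℓ_i a convex loss, writing each loss through its Fenchel conjugate, ℓ_i(u) = max over α_i of [α_i u − ℓ_i*(α_i)], turns RERM, min_w λ Σ_j φ_j(w_j) + (1/m) Σ_i ℓ_i(⟨w, x_i⟩), into the saddle-point problem min_w max_α f(w, α) with f(w, α) = λ Σ_j φ_j(w_j) + (1/m) Σ_i [α_i ⟨w, x_i⟩ − ℓ_i*(α_i)]. Spreading each regularizer term evenly over the nonzeros of its column and each conjugate term over the nonzeros of its row rewrites f as a sum over nonzero entries x_ij of terms f_ij(w_j, α_i), each depending on one primal and one dual coordinate. The code assumes the square loss and φ_j(w) = w²/2 (our choice; the page does not name a loss) and that every row and column of X has a nonzero entry; all function names are hypothetical. `block_schedule` shows one rotation, familiar from DSGD-style matrix factorization, in which p workers touch disjoint blocks of w and α within each sub-epoch; it illustrates the idea and is not claimed to be the exact DSO schedule.

```python
import random


def conj_sq_loss(a, y):
    # Fenchel conjugate of the square loss l(u) = 0.5 * (u - y)**2.
    return 0.5 * a * a + a * y


def direct_objective(X, y, w, alpha, lam):
    # f(w, alpha) = lam * sum_j w_j^2 / 2 + (1/m) * sum_i [alpha_i <w, x_i> - l_i*(alpha_i)]
    m = len(X)
    reg = lam * sum(0.5 * wj * wj for wj in w)
    coupling = sum(
        a * sum(wj * xij for wj, xij in zip(w, row)) - conj_sq_loss(a, yi)
        for row, yi, a in zip(X, y, alpha)
    )
    return reg + coupling / m


def doubly_separable_objective(X, y, w, alpha, lam):
    # Same f, summed over nonzeros x_ij of terms f_ij(w_j, alpha_i) only.
    m, d = len(X), len(w)
    row_nnz = [sum(1 for v in row if v != 0) for row in X]            # |Omega_i|
    col_nnz = [sum(1 for row in X if row[j] != 0) for j in range(d)]  # |Omega-bar_j|
    total = 0.0
    for i, row in enumerate(X):
        for j, xij in enumerate(row):
            if xij == 0:
                continue
            total += (lam * 0.5 * w[j] ** 2 / col_nnz[j]
                      - conj_sq_loss(alpha[i], y[i]) / (m * row_nnz[i])
                      + alpha[i] * w[j] * xij / m)
    return total


def block_schedule(p):
    # Rows (alpha) and columns (w) are split into p blocks each. In sub-epoch r,
    # worker q updates the pair (row block q, column block (q + r) % p).
    return [[(q, (q + r) % p) for q in range(p)] for r in range(p)]


if __name__ == "__main__":
    rng = random.Random(0)
    m, d = 6, 4
    X = [[rng.uniform(-1, 1) if rng.random() < 0.5 else 0.0 for _ in range(d)]
         for _ in range(m)]
    for i in range(m):
        X[i][i % d] = 1.0 + rng.random()  # ensure every row/column has a nonzero
    y = [rng.uniform(-1, 1) for _ in range(m)]
    w = [rng.uniform(-1, 1) for _ in range(d)]
    alpha = [rng.uniform(-1, 1) for _ in range(m)]
    print(direct_objective(X, y, w, alpha, 0.1))
    print(doubly_separable_objective(X, y, w, alpha, 0.1))
    print(block_schedule(3))
```

Because each f_ij reads and writes only w_j and α_i, the workers in one sub-epoch never share a parameter, so blocks of w need to be exchanged only between sub-epochs rather than after every update.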

Data-driven Machine Learning

Data analysis involving machine learning can be broadly divided into two types: task-oriented and data-driven.

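In task-oriented analysis, data are collected and formatted according to the task to be solved, and a predictor is implemented on top of an analysis platform. Data-driven analysis, on the other hand, aims to grasp the overall picture of complex data and to discover and visualize the knowledge it contains.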

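Our laboratory focuses on data-driven machine learning and conducts data mining research using traffic data and web marketing data. We also conduct research aimed at building a data-driven platform for large-scale data analysis based on asynchronous schemes.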